AI Fails in Production Due to Bad Data

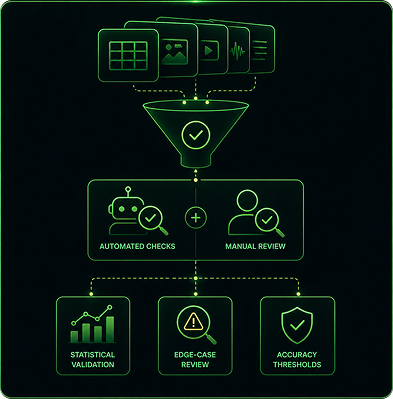

It is not the model architecture. It is not the training framework. It is the data. Failures are caused by uncontrolled annotation pipelines.

Quality issues are discovered too late — after training, after compute cost, after deployment risk has already accumulated.

Inconsistent Model Performance

Without controlled data operations, model accuracy degrades over time. Teams spend months debugging what should have been caught at the data layer.

No Single Point of Ownership

Annotation scattered across tools, teams, and vendors creates invisible quality gaps. No single point of ownership means no accountability for the outcome.

Quality Breaks at Scale

What works for 10K samples fails at 1M. Manual QA cannot keep pace with production volumes. Without a systematic QA process, errors compound as volume grows.